Fine-tuning a small language model to support teachers and foundational literacy in Uganda

11 May 2026

AI-for-Education.org's benchmarks team, Crane AI Labs

The true potential of AI in classrooms across low- and middle-income countries (LMICs) will only be realised by meeting users where they are, in the language they speak, and on the devices they already own.

While massive cloud-based models dominate the headlines, small language models (SLMs) offer a more practical solution for resource-constrained environments. Over the past six months, in collaboration with Crane AI Labs, we set out to discover what it takes to build a working SLM that runs entirely on a low-cost Android phone to assist teachers in their daily tasks in Uganda. Spoiler – it's hard work but we made big improvements!

Our main achievements include:

- Curating Ugandan training data: Assembled localised and bilingual educational items adapted to foundational literacy in Uganda.

- Creating a custom benchmark suite to define what good looks like: Built two specialised evaluation benchmarks to test both pedagogical knowledge adapted to the Ugandan context and Luganda linguistic understanding.

- Fine-tuning a mobile-ready SLM: We fine-tuned EduGanda-Gemma-3-1B, a model that works completely offline in Luganda to support teachers in their daily tasks.

Here is a look at how we did it, our key findings, and the challenges we faced along the way. While the model isn’t quite ready for deployment yet, these results show strong progress toward a fully offline, teacher‑supporting solution.

Results and learning

While around ten million people speak Luganda, AI support for the language is limited. In Uganda, the national curriculum requires instruction in local languages for grades P1–P3, but structured Luganda materials are scarce and teachers usually plan lessons manually. Interviews with 10 teachers in Kampala confirmed that lesson preparation remains their primary pain point.

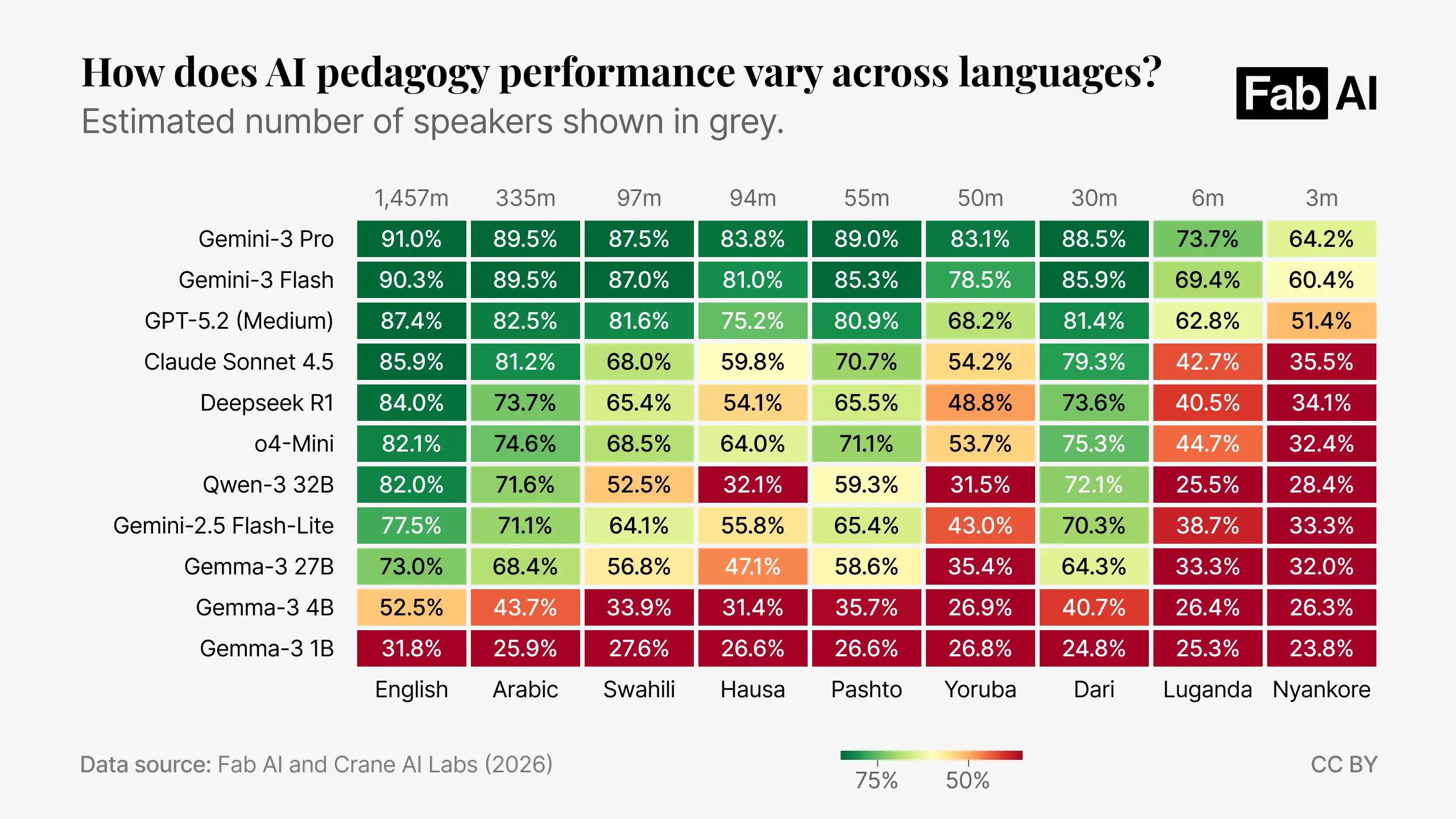

Additionally, the results from our multilingual Pedagogy Benchmark revealed a significant ‘language gap’ in AI capabilities for lower-resource languages such as Luganda. Almost all models, particularly smaller ones, showed large drops in accuracy when evaluated on the translated version of the benchmark.

We set out to fine-tune a 1-billion parameter model (Gemma-3 1B) for an educational task for teachers in Uganda.

Following the curation of localised training materials and the development of a bilingual benchmark evaluation suite, we explored several fine-tuning strategies to optimise the model performance. Our primary objective was to enhance both pedagogical accuracy and Luganda linguistic fluency within a constrained parameter budget.

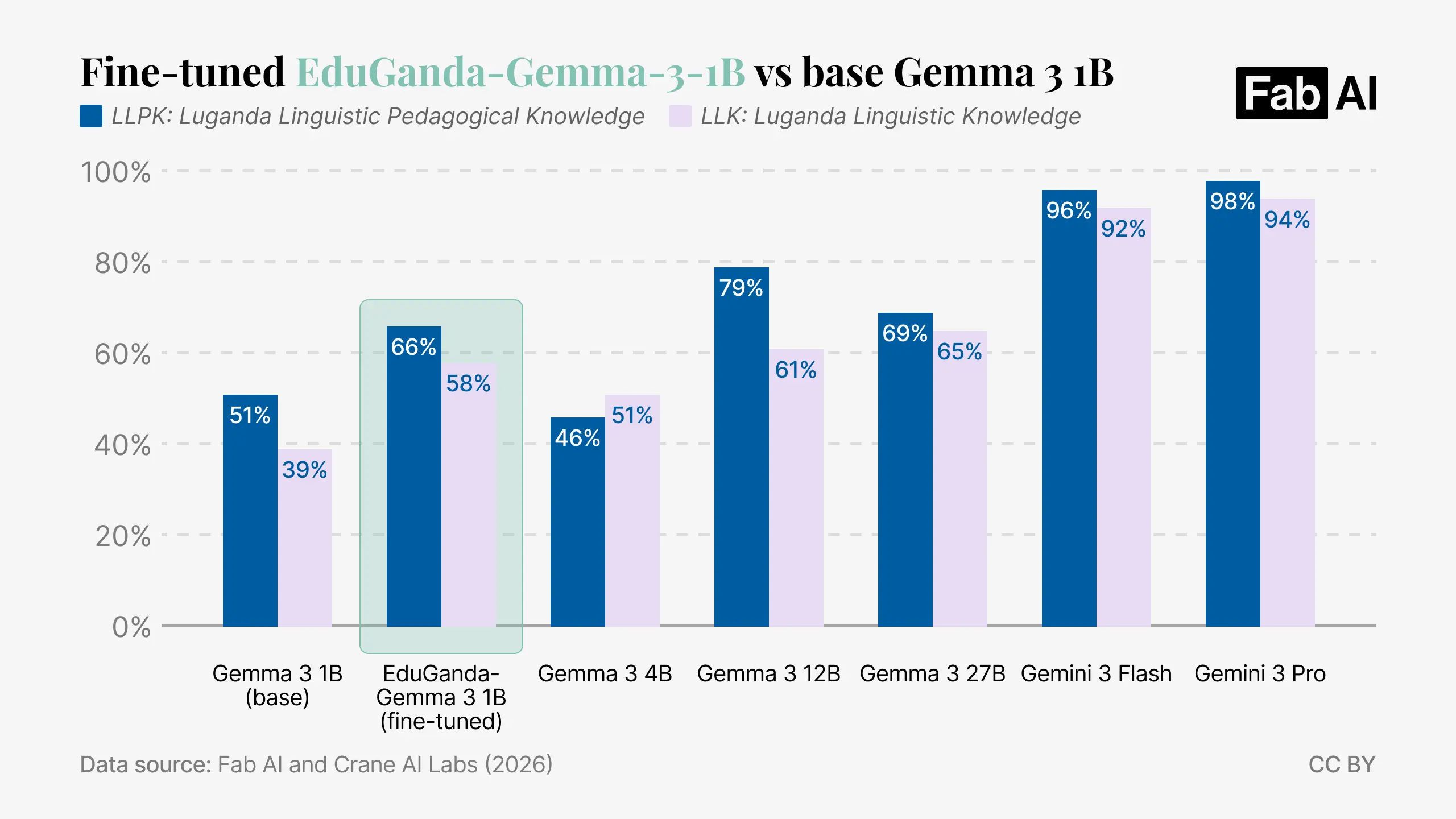

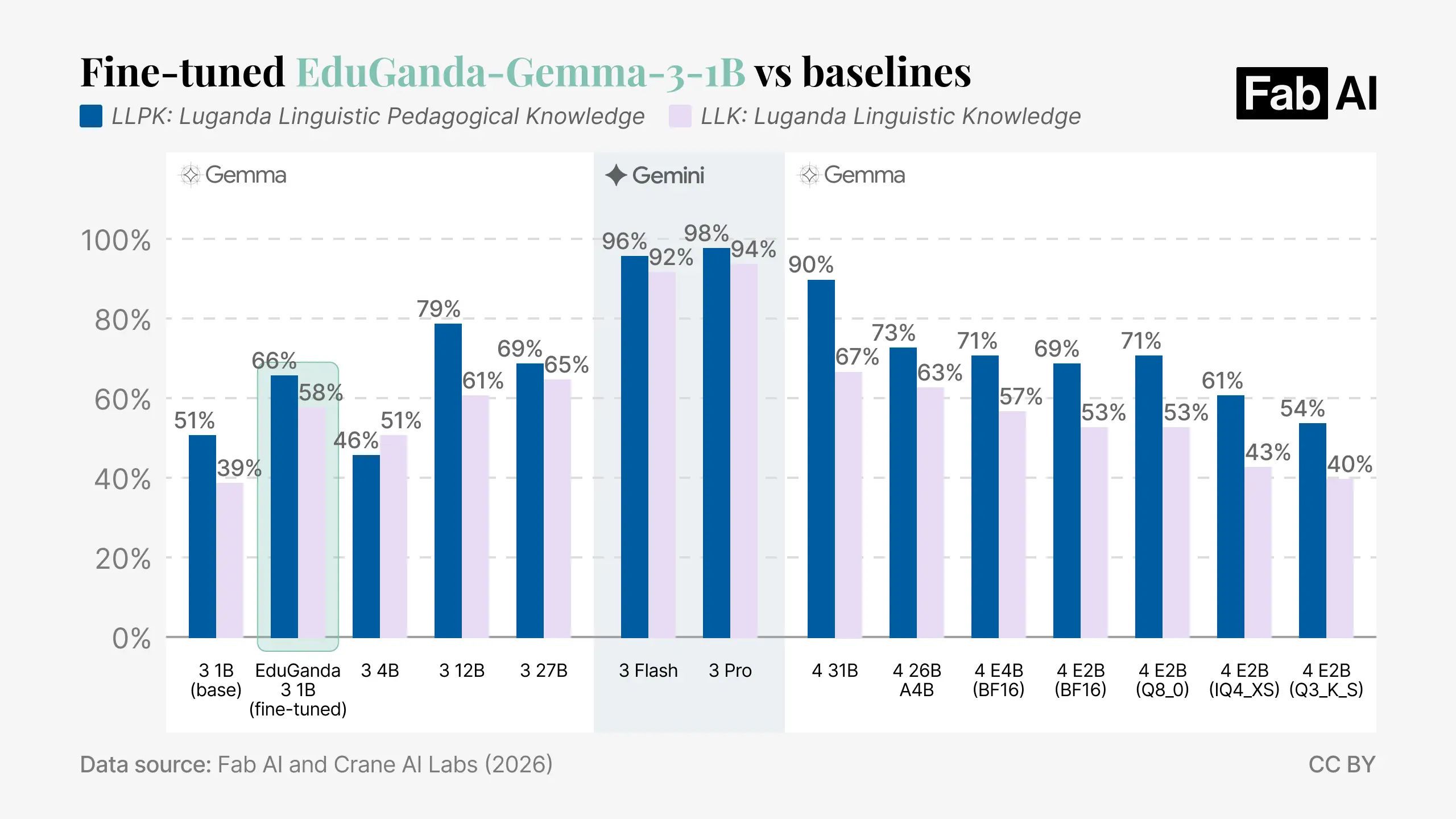

Our resulting model, EduGanda-Gemma-3-1B, showed substantial gains over the baseline. It scored 66% on the Luganda Linguistic Pedagogical Knowledge (LLPK) benchmark and 58.8% on the Luganda Linguistic Knowledge (LLK) benchmark*, outperforming a model four times its size (Gemma 3 4B). For comparison, the unmodified base model scored 51% and 39% on the same two benchmarks. A 12-billion parameter model reached 79% and 61%, but it lacks the efficiency required for the offline mobile deployment that EduGanda-Gemma-3-1B provides.

*Both benchmarks were evaluated in Luganda, with the instructions kept in English so that all models understood the task equally.

The 12B parameter model typically exceeds the storage and RAM capacity of the entry-level smartphones common in regions like Uganda. It requires 12.5 GB of storage (in its Q8_0 quantisation), whilst entry-level devices typically have 32 GB to 64 GB of total storage, causing the larger model to either fail to load or consume all available user space.

In contrast, the Gemma 3 1B model needs only 1.07 GB of storage (in Q8_0 quantisation) and can run on standard mobile hardware without crashing the system. Its roughly 1 GB size also makes it affordable to download: using a local MTN data bundle*, the download costs approximately 1,000 UGX ($0.27 USD).
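The storage and download figures above can be checked with a few lines of arithmetic. The sketch below uses only the numbers quoted in this post (model sizes, device storage, and the 2 GB for 2,000 UGX bundle from the footnote). The function names are illustrative, and the cost calculation assumes the bundle price scales linearly with data used, which is a simplification.

```python
# Figures quoted in the post.
MODEL_SIZE_GB = {"Gemma 3 1B (Q8_0)": 1.07, "Gemma 3 12B (Q8_0)": 12.5}
DEVICE_STORAGE_GB = (32, 64)       # typical entry-level total storage
BUNDLE_GB, BUNDLE_UGX = 2, 2000    # data bundle from the footnote


def storage_share(model_gb: float, device_gb: float) -> float:
    """Fraction of total device storage the model file would occupy."""
    return model_gb / device_gb


def download_cost_ugx(model_gb: float) -> float:
    """Pro-rata data cost, assuming the bundle price scales linearly."""
    return model_gb * BUNDLE_UGX / BUNDLE_GB


for name, size_gb in MODEL_SIZE_GB.items():
    shares = ", ".join(
        f"{storage_share(size_gb, device_gb):.0%} of {device_gb} GB"
        for device_gb in DEVICE_STORAGE_GB
    )
    print(f"{name}: {shares}; about {download_cost_ugx(size_gb):,.0f} UGX to download")
```

Note that total storage overstates what is actually free on a phone, since the operating system and existing apps take their share first, and RAM is a separate constraint not modelled here.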

*Based on a Ugandan data bundle of 2 GB for 2,000 UGX.

Our work with the EduGanda-Gemma-3-1B model provided several insights into the behaviour of small language models (SLMs) in low-resource environments.

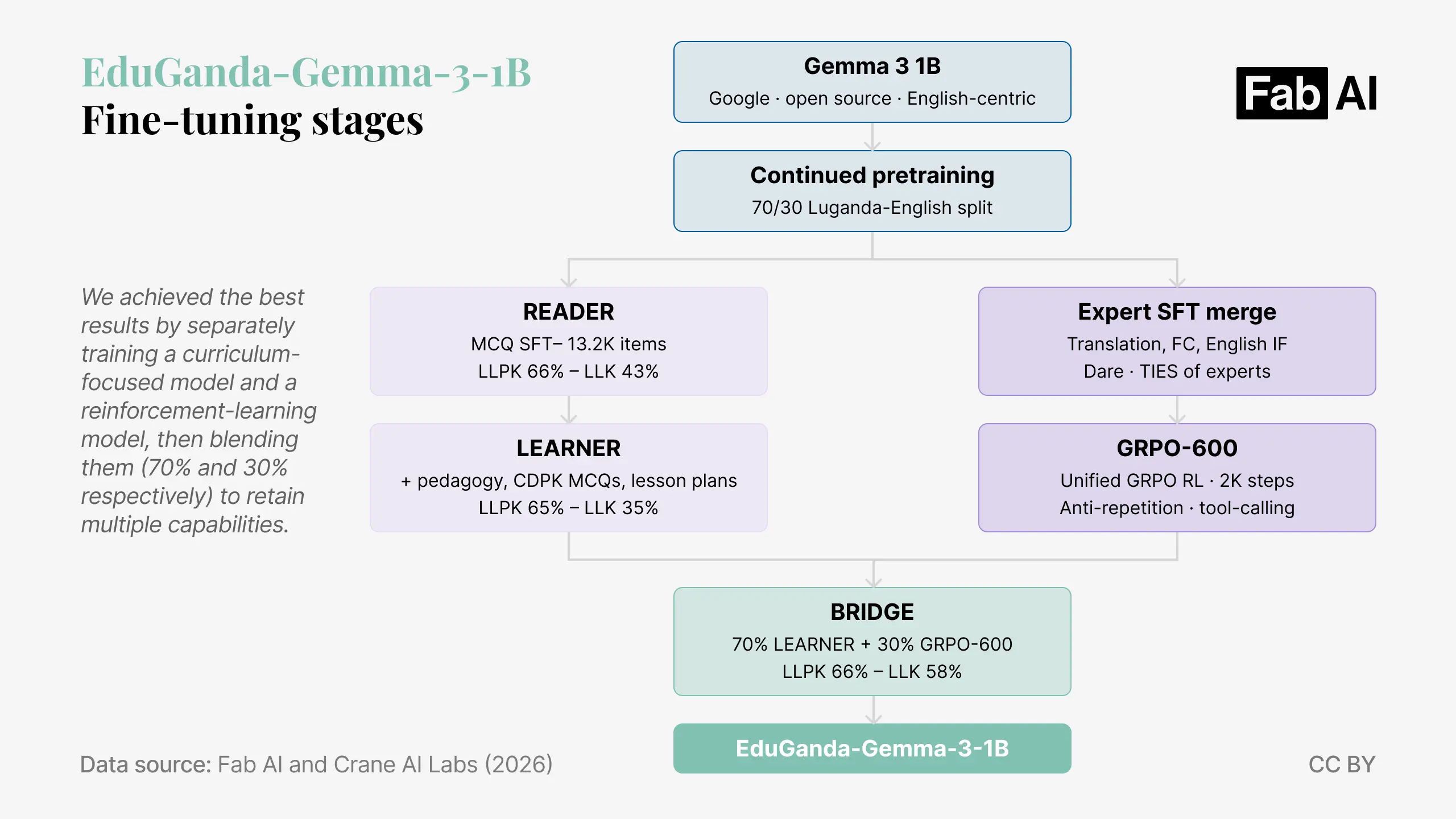

1. Managing catastrophic forgetting

We found that the 1B parameter model is highly sensitive to ‘catastrophic forgetting’. Because the model has limited capacity, training it on a new skill, such as answering multiple-choice questions (MCQs), often erased previously learned abilities, such as generating exercises. Model blending proved more effective than simply mixing all training data into one set: we achieved the best results by separately training a curriculum-focused model and a reinforcement-learning model, then blending them (70% and 30% respectively) to retain multiple capabilities (see diagram in Methods section).
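To make the idea of blending concrete, here is a minimal sketch that assumes blending means a linear interpolation of the two models' weights, parameter by parameter. The post does not specify the exact merging method, so treat this as one common interpretation. The parameter names and toy values are hypothetical, and real checkpoints would hold tensors rather than Python lists.

```python
from typing import Dict, List

Weights = Dict[str, List[float]]  # parameter name -> flattened values


def blend(model_a: Weights, model_b: Weights, share_a: float = 0.7) -> Weights:
    """Return share_a * model_a + (1 - share_a) * model_b for every parameter."""
    if model_a.keys() != model_b.keys():
        raise ValueError("both checkpoints must have the same parameter names")
    blended: Weights = {}
    for name, values_a in model_a.items():
        values_b = model_b[name]
        if len(values_a) != len(values_b):
            raise ValueError(f"size mismatch for parameter {name}")
        blended[name] = [
            share_a * a + (1 - share_a) * b for a, b in zip(values_a, values_b)
        ]
    return blended


# Toy stand-ins for the curriculum-focused and reinforcement-learning models.
curriculum_model = {"layer.weight": [1.0, 2.0], "layer.bias": [0.0]}
rl_model = {"layer.weight": [3.0, 0.0], "layer.bias": [1.0]}

merged = blend(curriculum_model, rl_model, share_a=0.7)
print(merged)
```

Because both models start from the same base checkpoint, their weights stay close enough for this kind of averaging to be meaningful; merging unrelated models this way would not be expected to work.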

2. Prioritising data quality over volume

Our experiments confirmed that high-quality, curated data is much more valuable than large amounts of noisy data. We tested this hypothesis by training a second model on 1.53 million machine-translated sentence pairs, which was 46 times more data than our custom set. Model performance got significantly worse because of translation errors and ‘noise’ in the machine-translated text. In the end, our 35,000 carefully curated and culturally adapted pairs performed much better than the 1.53 million noisy ones.

3. Solving specific fine-tuning challenges

Training a model for a specific language and task created unique technical problems that required targeted strategies:

- Vocabulary adaptation: We learned that using a much lower learning rate for the vocabulary layer was essential; without this, the model could learn individual words but struggled to build sentences.

- Bias correction: The model originally developed an ‘answer-position bias,’ where it would guess ‘B’ for almost every multiple-choice question regardless of the content. We fixed this by balancing the training dataset with English examples.

- Repetition control: We initially tried reinforcement learning (RL) to stop the model from repeating the same Luganda phrases. However, we discovered that a simple repetition penalty setting during inference worked even better. This eliminated text loops with zero cost to accuracy, actually outperforming our more complex RL efforts.
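For readers unfamiliar with the repetition penalty mentioned in the last point, the sketch below shows the widely used formulation in which the scores of already-generated tokens are lowered before the next token is picked. The post does not state which runtime setting or penalty value was used, so the value 1.25, the function name, and the toy scores are all assumptions for illustration.

```python
from typing import Iterable, List


def apply_repetition_penalty(
    logits: List[float], generated_ids: Iterable[int], penalty: float = 1.2
) -> List[float]:
    """Lower the score of every token that has already been generated."""
    adjusted = list(logits)
    for token_id in set(generated_ids):
        score = adjusted[token_id]
        # Dividing a positive score or multiplying a negative one both reduce it.
        adjusted[token_id] = score / penalty if score > 0 else score * penalty
    return adjusted


logits = [2.0, 1.9, -0.5, 0.3]  # toy scores for a four-token vocabulary
history = [0, 0, 2]             # token 0 has already been produced twice

penalised = apply_repetition_penalty(logits, history, penalty=1.25)
next_token = max(range(len(penalised)), key=penalised.__getitem__)
print(penalised, next_token)
```

Without the penalty, greedy decoding would pick token 0 again; with it, the close runner-up wins instead. Since this happens only at inference time, the model weights are untouched, which fits the observation that accuracy did not suffer.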

Methods: Datasets and benchmarks

Datasets

To address the lack of digital pedagogical content for Luganda – a low-resource Bantu language – we developed a custom workflow to curate a high-quality training corpus through the following four stages.

Our process began with automated document parsing, where we used a Docling pipeline to OCR-process physical foundational texts, including ‘A Handbook of Luganda’ (Pilkington, 1915) and the 2023 Uganda Curriculum Guide, extracting structured text from scanned paper documents. We then implemented an eight-stage bilingual exercise pipeline that processed 5.4 GB of educational PDFs to generate 3,472 bilingual exercises across domains like phonological awareness, comprehension, vocabulary, and grammar.

To fill critical gaps in structured data, we employed synthetic data generation via Gemini 2.5 Flash to create culturally grounded training materials. This went beyond simple translation by injecting real Luganda grammar patterns and local contexts – such as Ugandan names, currency, and market scenarios – to ensure the model learned authentic linguistic nuances. Finally, we performed cultural and linguistic validation to refine the dataset with Luganda-specific phonics rules, such as L/R alternation (in Luganda, 'l' and 'r' are allophones of a single sound, with 'r' typically appearing after front vowels and 'l' elsewhere), and complex noun-class agreement systems where the class of the noun (e.g. a person vs a tree) determines the prefixes that appear on adjectives, verbs, and numerals throughout the sentence. The process concluded with a final verification stage to ensure the dataset’s total accuracy and linguistic authenticity.

Benchmarks

The Luganda Linguistic Pedagogical Knowledge (LLPK) Benchmark was created to measure whether a model understands how to teach foundational literacy specifically within the Ugandan context, using a hybrid approach of LLM generation and human review. The first stage involved synthesising global reading science (including the GEEAP report) to establish a taxonomy of domains to evaluate (systematic phonics, oral language, writing, reading comprehension and fluency, phonological awareness, ...). To ground this theoretical framework in Ugandan classroom practice, the generation process integrated the Ministry of Education P1 Luganda Teacher’s Guide. To support orthographic and morphological precision, the pipeline also drew on Pilkington’s 1915 A Handbook of Luganda as a foundational reference grammar. All these elements were then combined with specific instructions to generate the benchmark with Gemini 2.5 Flash. The automated pipeline was followed by a two-stage manual validation process, first by an education expert and then by native Luganda experts.

The Luganda Linguistic Knowledge (LLK) Benchmark was created to test if the model actually knows the Luganda language rules it is supposed to teach. We structured this benchmark using the Common European Framework of Reference for Languages (CEFR)*, with 75% of the questions covering foundational levels (A1-B1) to ensure the model has perfect recall of the basic building blocks used in Grade 1-2 instruction. The questions are heavily weighted toward Morphology and Concord (30%) and Syntax (25%) because Luganda uses a complex system of 12 noun classes where every prefix must agree. We also included ‘stress tests’ at the C1-C2 levels to ensure the model understands deep cultural context and advanced grammar, which is necessary for a tool acting as a teacher's assistant. This benchmark ensures the model does not ‘hallucinate’ spellings or grammar, which would make its lesson plans unusable for students.
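A benchmark organised by CEFR level lends itself to reporting accuracy separately for the foundational and advanced questions. The sketch below shows one way such a breakdown could be computed for multiple-choice results. The record format, band labels, and toy data are hypothetical and are not taken from the released benchmark.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

FOUNDATIONAL_LEVELS = {"A1", "A2", "B1"}

# Each record: (CEFR level, option the model chose, correct option).
Result = Tuple[str, str, str]


def accuracy_by_band(results: List[Result]) -> Dict[str, float]:
    """Group questions into A1-B1 and B2-C2 bands and return accuracy per band."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for level, predicted, answer in results:
        band = "A1-B1" if level in FOUNDATIONAL_LEVELS else "B2-C2"
        total[band] += 1
        correct[band] += int(predicted == answer)
    return {band: correct[band] / total[band] for band in total}


toy_results = [
    ("A1", "B", "B"),
    ("A2", "C", "A"),
    ("B1", "D", "D"),
    ("C1", "A", "A"),
]

scores = accuracy_by_band(toy_results)
print(scores)
```

Reporting the bands separately makes it visible when a model does well on advanced items while still missing the basic building blocks, which matters most for early-grade instruction.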

*International standard for assessing language proficiency across six levels, from A1 (beginner) to C2 (mastery), defining skills in reading, writing, listening, and speaking.

Methods: Model fine-tuning

The different trials

The development of the EduGanda-Gemma-3-1B model was guided by the technical constraints of a basic Android phone with no internet. We chose a 1-billion parameter model because it is small enough (978 MB in memory) to fit on these devices and offers efficient performance. During our experiments, we trained six different versions of the model and learned that a small model cannot hold all skills at once. Often, adding training data for a new skill like content generation would break another skill like question answering. We also found that mixing all training data together did not work well; instead, merging two separately trained models was the only way to retain multiple capabilities without one skill erasing another.

The successful training

Our final training process used five steps to solve specific performance problems. First, we adapted the model to Luganda using a very low learning rate for the vocabulary layer so it could build full sentences instead of just words. We also corrected an ‘answer-position bias’, where the model always chose ‘B’, by training it on balanced English questions. The core training used 13,200 Luganda curriculum items, including lesson plans and bilingual exercises. To stop the model from repeating the same words, we used a repetition penalty at inference time, which worked better than reinforcement learning and had no cost to accuracy. The final model is deployed via the LiteRT mobile runtime and can generate 12–18 tokens per second on mid-range hardware with no network dependency (this equates to around 20–30 seconds for a 300-word paragraph).
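The answer-position fix described above depends on a training set where the correct option appears equally often in each slot. The sketch below shows one simple way to build such a set by cycling the correct answer through positions A to D. The post does not describe how the balanced questions were constructed, so the item format and function name are assumptions, and the code expects four options with distinct texts.

```python
from collections import Counter
from typing import Dict, List

LETTERS = "ABCD"


def balance_answer_positions(items: List[Dict]) -> List[Dict]:
    """Reorder each item's options so correct answers cycle evenly through A-D."""
    balanced = []
    for index, item in enumerate(items):
        target = index % len(LETTERS)  # slot the correct option should occupy
        distractors = [opt for opt in item["options"] if opt != item["answer"]]
        options = distractors[:target] + [item["answer"]] + distractors[target:]
        balanced.append(
            {
                "question": item["question"],
                "options": options,
                "answer_letter": LETTERS[target],
            }
        )
    return balanced


# Toy items in which the correct option always starts in the first slot.
toy_items = [
    {"question": f"Q{i}", "options": ["right", "w1", "w2", "w3"], "answer": "right"}
    for i in range(8)
]

balanced = balance_answer_positions(toy_items)
counts = Counter(item["answer_letter"] for item in balanced)
print(counts)
```

With answers spread evenly, always guessing one letter is right only a quarter of the time, so the model gains nothing from a positional shortcut and has to attend to the content of each option.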

Open source and next steps

We are committed to supporting the broader ecosystem by making every artefact from this project publicly available. Our open-source release includes the fine-tuned EduGanda-Gemma-3-1B model, 3,472 bilingual literacy exercises, the full FLN training dataset, and the bilingual benchmarks. All outputs are accessible via Crane AI Labs’ Hugging Face and the Fab AI GitHub.

The recent release of Gemma 4 has great potential: early testing shows higher baseline scores and efficient quantisation levels. For low-end Android phones, the Gemma 4 E2B model is particularly promising as it occupies less than 3 GB of storage in its most quantised versions (see last two models).
