Research Paper

Talking teachers' language: Testing multilingual pedagogy ability of AI

12 January 2026

AI-for-Education.org's benchmarks team, EdTech Hub's AI Observatory, UK International Development

Major AI labs claim their models can handle an ever-growing number of languages, but how well can these models actually support teaching in those languages and understand key pedagogical concepts?

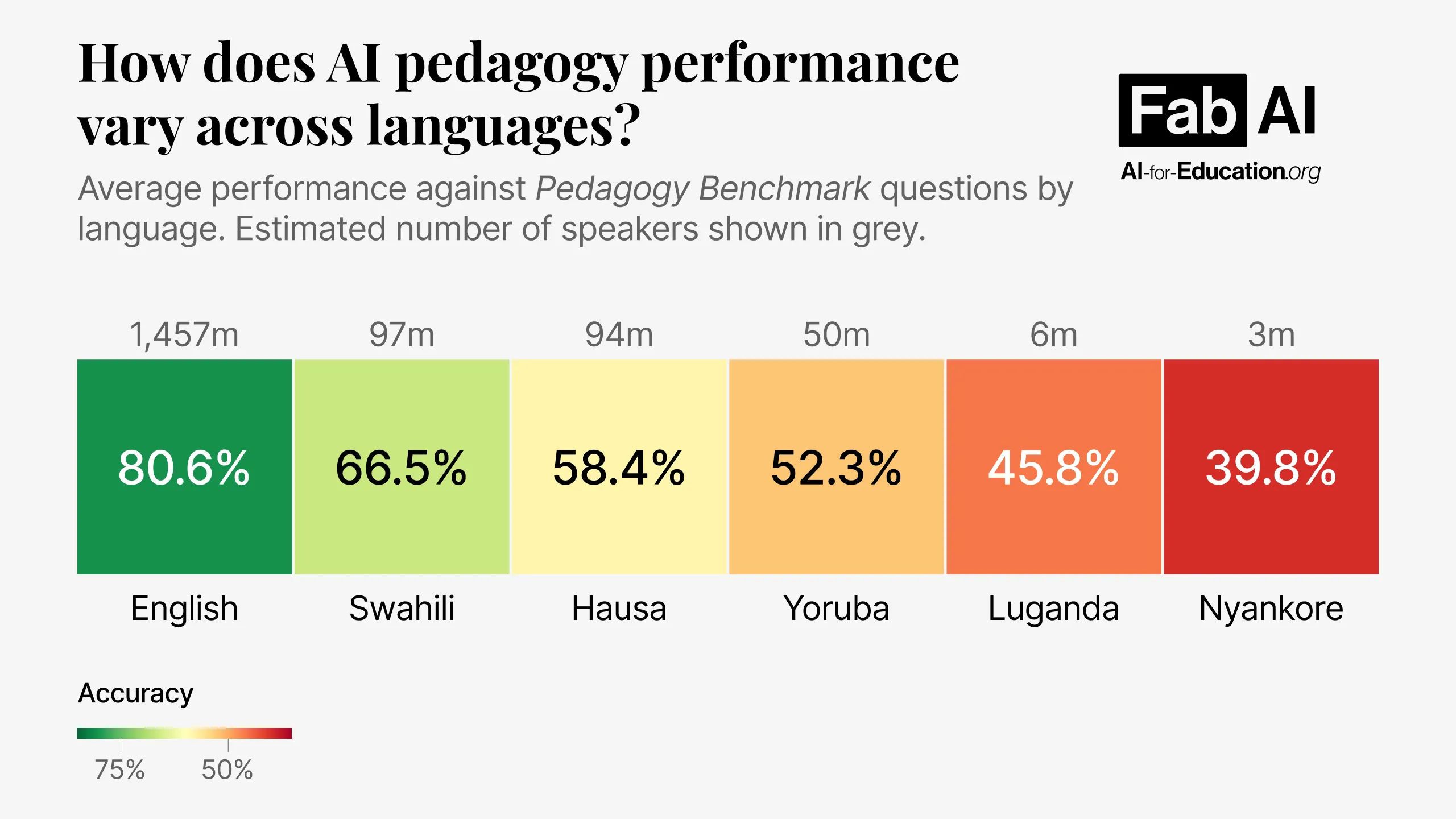

We translated all questions from our Pedagogy Benchmark (the world’s first AI benchmark testing core teaching concepts) into African languages using a combination of machine and human translation, in collaboration with Crane AI Labs. We started with five African languages, chosen to represent a range of speaker population sizes.

Download the full paper below to see our initial findings on how performance varies:

- across languages, from more widely spoken African languages such as Swahili to lower-resource languages such as Nyankore;

- across models, comparing flagship 'frontier' models with smaller, cheaper 'edge' models;

- in the reasoning effort models use across different languages;

- between machine-translated and human-translated questions;

- in terms of latency (speed of response) as well as accuracy; and

- depending on whether the instructions (system prompt) are kept in English or also translated.

Learn More

See our benchmarks leaderboard for up-to-date model performance on our AI-for-education benchmarks for low- and middle-income countries (LMICs).
